In the era of big data, data has become one of the most valuable assets an organization holds. Its loss or corruption can have serious consequences that no organization can afford, so ensuring data security and integrity has become a key task. One technique that helps protect data is redundancy.

Many people ask, “What is data redundancy?” Data redundancy occurs when an organization keeps identical information in several locations at the same time. As a strategic approach, it extends beyond mere duplication: it combines tools, methodologies, and infrastructure planning aimed at keeping data continuously accessible and intact.

Types of data redundancy

The following types of data redundancy are distinguished:

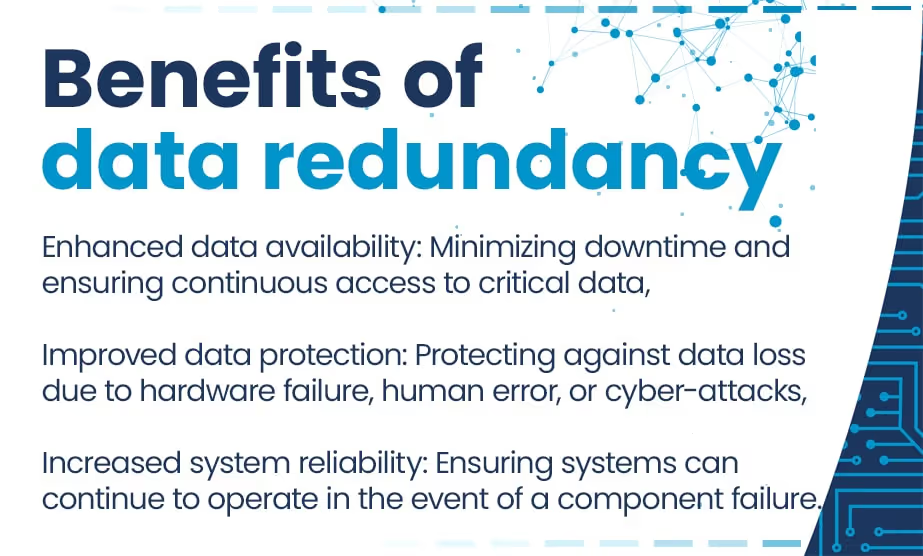

Redundancy plays a vital role in preventing the loss of both master data and more heavily processed data. It provides an additional shield against system breakdowns and data mishaps.

Main advantages of intentional data redundancy:

Depending on the complexity of the IT systems involved, there are many approaches to redundancy, each with its own advantages and applications. One of the most common strategies is to create regular backups: data is duplicated and stored in a separate location, such as an external hard drive or a cloud storage platform.

Another approach is clustering. It groups multiple computers or servers into a single logical system that functions as a unified entity. If one cluster element fails, other nodes can take over its tasks, ensuring system continuity. Clustering is used in high-availability systems where minimizing downtime is critical.

RAID

InTechHouse also works with many other redundancy methods. One of them is RAID (Redundant Array of Independent Disks), a widely used technique for improving storage performance and reliability. It comes in various configurations known as RAID levels:

- RAID 0: stripes data across disks to maximize performance but provides no redundancy, so it is suitable only for non-critical applications.
- RAID 1: duplicates the data onto two disks to create a mirrored image. It offers robust redundancy, but usable capacity is limited to that of a single disk.
- RAID 5: stripes data across multiple disks and stores parity information alongside it. This setup provides good redundancy while leaving more of the raw capacity usable.
- RAID 6 and RAID 10: offer further levels of redundancy. RAID 6 uses dual parity, while RAID 10 combines mirroring and striping for enhanced fault tolerance.
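The parity idea behind RAID 5 can be illustrated with a few lines of Python. This is only a minimal sketch of the arithmetic for a single stripe, not how a RAID controller is implemented: the helper `xor_blocks` and the three sample blocks are hypothetical, and a real RAID 5 array also rotates the parity block across the disks.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR equal-length byte blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# One stripe spread over three hypothetical data disks (illustrative values).
data_blocks = [b"ACCT", b"2024", b"DATA"]

# The parity block is the XOR of all data blocks in the stripe.
parity = xor_blocks(data_blocks)

# Simulate losing the second disk and rebuild its block from the survivors.
lost_index = 1
survivors = [block for i, block in enumerate(data_blocks) if i != lost_index]
rebuilt = xor_blocks(survivors + [parity])

print(rebuilt == data_blocks[lost_index])
```

Because XOR is its own inverse, combining the surviving blocks with the parity block yields the missing block. The same property explains why single parity tolerates the loss of only one disk per stripe.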

Another strategy is data replication: generating duplicates of data and storing them on various servers or in various locations. The result is a set of identical copies of the data spread across different sites.

Lost or damaged data can be restored using the following replication methods:

- Log-based Incremental Replication: transaction logs are retained, and the replication mechanism uses them to detect changes in the primary data source and then mirror those changes in the replica destination.
- Key-based Incremental Replication: duplicates data sets by using a designated replication key.
- Full Table Replication: in contrast to incremental replication, this method duplicates the entire database.
- Snapshot Replication: captures a snapshot of the source data and reproduces that exact dataset in the replicas, preserving the state of the data at the moment of the snapshot.
- Transactional Replication: starts by replicating all existing data from the publisher (source) to the subscriber (replica); after that, any subsequent changes made at the publisher are promptly replicated to the subscriber in the same order.
- Merge Replication: integrates two or more databases into a unified entity, ensuring that updates made to the primary database are mirrored in the secondary databases.
- Bidirectional Replication: a form of transactional replication in which two databases exchange updates.
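As an illustration of key-based incremental replication, the sketch below copies only the rows whose replication key is newer than the last value seen. Everything in it is an assumption made for the example: the function name `replicate_incrementally`, the `updated_at` column used as the replication key, and the in-memory list and dictionary standing in for the source table and the replica.

```python
def replicate_incrementally(source_rows, replica, last_key):
    """Copy rows whose replication key is greater than last_key into the replica.

    Returns the highest replication key seen, to be stored as the new bookmark.
    """
    newest = last_key
    for row in source_rows:
        if row["updated_at"] > last_key:
            replica[row["id"]] = dict(row)  # upsert by primary key
            newest = max(newest, row["updated_at"])
    return newest


# Hypothetical source table; "updated_at" plays the role of the replication key.
source = [
    {"id": 1, "name": "alpha", "updated_at": 10},
    {"id": 2, "name": "beta", "updated_at": 20},
]
replica = {}

# The first run starts from an empty bookmark and therefore copies everything.
bookmark = replicate_incrementally(source, replica, last_key=0)

# Later changes on the source: row 1 is updated and row 3 is added.
source[0] = {"id": 1, "name": "alpha-v2", "updated_at": 30}
source.append({"id": 3, "name": "gamma", "updated_at": 35})

# The second run transfers only the rows changed since the bookmark.
bookmark = replicate_incrementally(source, replica, bookmark)
print(bookmark, sorted(replica))
```

One trade-off worth noting: a scheme like this sees only rows that still exist in the source, so rows deleted there would stay in the replica unless deletions are tracked some other way.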

Cloud storage and replication services are a key element of off-site data redundancy and high availability. Cloud services make it possible to store data copies in remote locations, which improves resilience to failures and supports system continuity. With data replicated across several cloud locations, organizations can keep their data secure and accessible even when local technical issues occur. This flexible and scalable infrastructure allows redundancy to be managed effectively, resulting in greater reliability and service availability.

Although redundancy is an effective way to prevent data loss, it has its limitations. Each business must identify which data is critical and take into account the complexity of the chosen solution, data integration, the risk of data corruption, and the need for an adequate disaster recovery plan as part of effective database management.

Data synchronization

InTechHouse recognizes that addressing the challenges of data redundancy in databases also involves implementing effective data synchronization to counteract inconsistency. Synchronization keeps data aligned across multiple devices on an ongoing basis, automatically propagating changes between them to maintain consistency across systems. Several synchronization methods exist, each serving a distinct purpose. Version control and file synchronization tools handle simultaneous changes to multiple copies of the same data or file. Distributed and mirror synchronization methods offer more specialized functions.
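A one-way mirror synchronization can be sketched as follows. This is a simplified illustration rather than a description of any particular tool: the functions `checksum` and `mirror` are hypothetical, and plain dictionaries mapping names to bytes stand in for the primary and replica stores.

```python
import hashlib


def checksum(content: bytes) -> str:
    """Return a SHA-256 hex digest used to detect differing copies."""
    return hashlib.sha256(content).hexdigest()


def mirror(source: dict, target: dict) -> dict:
    """Make target an exact copy of source and report what was changed."""
    changes = {"copied": [], "deleted": []}
    for name, content in source.items():
        # Copy when the item is missing or its checksum differs.
        if name not in target or checksum(target[name]) != checksum(content):
            target[name] = content
            changes["copied"].append(name)
    for name in list(target):
        # Mirror semantics: anything absent from the source is removed.
        if name not in source:
            del target[name]
            changes["deleted"].append(name)
    return changes


# Hypothetical stores: the replica is out of date and holds a stale file.
primary = {"orders.csv": b"id,total\n1,9.50\n", "users.csv": b"id\n1\n"}
replica = {"orders.csv": b"id,total\n", "old.csv": b"stale"}

print(mirror(primary, replica))
print(replica == primary)
```

Comparing checksums instead of full contents is the design choice that matters when the two copies live on different machines, because only the short digests need to be exchanged to decide what to transfer. Running `mirror` a second time reports no changes, which is a convenient way to confirm that the copies are consistent.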

Cost implications

When planning reliable data redundancy, it is vital to balance the costs against the risk of data loss. Redundancy may demand investment in additional storage, infrastructure, and administrative resources, but these investments protect against the potentially devastating aftermath of data loss. Without redundancy measures, organizations are exposed to cyberattacks, hardware malfunctions, natural disasters, and other unexpected events, all of which threaten data integrity and accessibility. Adopting resilient redundancy solutions is therefore a necessity for enterprises that want to mitigate these hazards and maintain seamless operations and data reliability.

Data storage demands

Redundant data imposes a heavier burden on storage capacity, resulting in escalated storage expenses and potential performance degradation. For instance, within a product inventory database, duplicating supplier information for each supplied product inflates storage requirements. Adopting a normalized approach, where supplier details are stored in a separate table, mitigates redundancy and minimizes storage demands.
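The normalized inventory layout described here might look like the following sketch, which uses Python's built-in `sqlite3` module with an in-memory database. The table names, column names, and sample rows are assumptions chosen for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Supplier details are stored once...
    CREATE TABLE suppliers (
        id      INTEGER PRIMARY KEY,
        name    TEXT NOT NULL,
        address TEXT NOT NULL
    );
    -- ...and each product only references its supplier by id.
    CREATE TABLE products (
        id          INTEGER PRIMARY KEY,
        name        TEXT NOT NULL,
        supplier_id INTEGER NOT NULL REFERENCES suppliers(id)
    );
""")

conn.execute("INSERT INTO suppliers VALUES (1, 'Acme Ltd', '1 Example Street')")
conn.executemany(
    "INSERT INTO products (name, supplier_id) VALUES (?, ?)",
    [("bolt", 1), ("nut", 1), ("washer", 1)],
)

# A supplier change is a single-row update, not one update per product.
conn.execute("UPDATE suppliers SET address = '2 Example Street' WHERE id = 1")

# A join reassembles the combined view whenever it is needed.
rows = conn.execute("""
    SELECT p.name, s.name, s.address
    FROM products AS p
    JOIN suppliers AS s ON s.id = p.supplier_id
    ORDER BY p.name
""").fetchall()
print(rows)
```

Besides saving space, keeping supplier details in one row removes the risk that copies of the same address drift apart across product records.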

Drawing on years of experience, InTechHouse recommends that organizations consider the following steps to implement redundancy measures effectively:

These examples underscore the importance of data redundancy as a key component of reliability and continuity strategies across various sectors of the economy. They highlight the need to invest in advanced technological solutions that minimize the risk of data loss and ensure high availability of services for users. In this way, both the data in use and its other versions remain safe.

The predominant data redundancy and storage trend anticipated for 2024 is the integration of AI into storage management practices. Administrators are poised to use AI more extensively for activities ranging from storage provisioning and capacity planning to backups and data protection, including predictive failure analysis. AI may also prove beneficial in facilitating workload migrations.

Several years ago, cloud services emerged as the frontrunner for the future of data redundancy, prompting most organizations to embrace a cloud-first approach to their IT operations. In recent years, however, there has been a noticeable shift in this trend, with cloud repatriation becoming increasingly prevalent.

Cost is a primary driving force behind this shift, as more organizations come to terms with the actual expense of storing large datasets in the cloud. In addition, factors such as compliance mandates, performance concerns, and the need for greater operational flexibility have prompted some organizations to bring their data back in-house.

Data redundancy is a crucial element in preventing data loss: it keeps data available without compromising data quality, even if one or more copies are compromised. It enhances data accessibility and availability, which are vital for uninterrupted operations. Employing redundancy requires adherence to best practices and selecting the redundancy type that fits the business requirements. Through this approach, organizations safeguard their essential data and applications and keep them available whenever necessary.

The InTechHouse team can help identify the current level of data redundancy in your organization and select appropriate solutions tailored to the specifics of your industry, company size, and needs. We invite you to consult with us. During the consultation, we will discuss all aspects of protecting your data and keeping your company's operations uninterrupted.

What factors should organizations consider when designing a data redundancy strategy?

Organizations should consider factors such as the criticality of the data, regulatory requirements, budget constraints, performance needs, scalability, and the complexity of managing redundant systems.

What are the potential challenges associated with implementing data redundancy?

Challenges may include increased storage costs for duplicated data, complexity in managing redundant systems, data consistency issues, and the need for robust monitoring and maintenance practices.

How can organizations ensure data consistency across redundant copies of the data?

Organizations can implement techniques such as data synchronization, checksums, and transactional integrity mechanisms to ensure consistency across redundant copies of data.

What are some common methods used to achieve data redundancy?

Common methods include RAID (Redundant Array of Independent Disks), data replication, backups, and clustering.

How often should organizations review and update their data redundancy strategy?

Organizations should regularly review and update their data redundancy strategy to accommodate changes in technology, business requirements, and regulatory standards. Regular testing and validation are also essential to ensure the effectiveness of redundancy measures.

A technology leader specializing in advanced hardware, embedded systems, and AI solutions.

He bridges deep engineering expertise with strategic thinking, helping transform complex system architectures into practical technologies used across industries such as aerospace, defense, telecommunications, and industrial IoT.

With a strong engineering background and ongoing PhD research, he combines academic insight with real-world project experience. Jacek also shares his knowledge through technical and business publications, focusing on system design, digital transformation, and the evolving integration of hardware and AI.
